Nick Bostrom and the Trilemma That Started It All

In 2003, philosopher Nick Bostrom proposed the simulation argument: if a civilization becomes capable of creating conscious simulations, it could generate so many simulated beings that a randomly chosen conscious entity would almost certainly be in a simulation. The argument was formal, probabilistic, and deliberately uncomfortable. It didn’t claim the universe is a simulation. It claimed that if posthuman civilizations tend to run such simulations at scale, the math strongly favors our being inside one.

The paper argues that at least one of the following propositions is true: the human species is very likely to go extinct before reaching a posthuman stage; any posthuman civilization is extremely unlikely to run a significant number of simulations of their evolutionary history; or we are almost certainly living in a computer simulation. The structural elegance of this trilemma is what made it stick. You can reject the conclusion, but only by accepting one of the other unsettling alternatives. Bostrom himself explicitly stated that “simulating the entire universe down to the quantum level is obviously infeasible, unless radically new physics is discovered.” That caveat is important. The hypothesis doesn’t require a perfect universe-wide rendering. It only requires enough fidelity to sustain convincing experience.
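The trilemma can be read off a single ratio that appears in Bostrom’s paper: the fraction of human-type observers who live in simulations. The sketch below is a minimal illustration of that ratio. The function and parameter names are made up for this example, and the input values are arbitrary illustrations, not estimates of anything.

```python
def simulated_fraction(f_posthuman: float, f_interested: float, n_sims: float) -> float:
    """Fraction of human-type observers who live inside simulations.

    f_posthuman  : fraction of civilizations that reach a posthuman stage
    f_interested : fraction of posthuman civilizations that run ancestor simulations
    n_sims       : average number of ancestor simulations run by an interested one
    (Parameter names are hypothetical labels chosen for this sketch.)
    """
    x = f_posthuman * f_interested * n_sims
    return x / (x + 1.0)


# Illustrative inputs only: each row drives one horn of the trilemma.
scenarios = {
    "almost nobody survives": (1e-12, 0.5, 1e9),
    "almost nobody bothers": (0.5, 1e-12, 1e9),
    "some survive and bother": (1e-3, 1e-3, 1e9),
}

for label, (f_p, f_i, n) in scenarios.items():
    print(f"{label:>24}: fraction simulated = {simulated_fraction(f_p, f_i, n):.6f}")
```

The ratio stays near zero only when the first or second factor is tiny. Otherwise a large number of simulations pushes it toward one, which is why rejecting the third proposition means accepting one of the other two.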

Seth Lloyd and the Universe’s Computational Budget

Merely by existing, all physical systems register information. By evolving dynamically in time, they transform and process that information. The laws of physics determine the amount of information that a physical system can register and the number of elementary logic operations that a system can perform. This wasn’t a poetic claim. It was the premise for a precise calculation by MIT physicist Seth Lloyd, one of the world’s leading experts in quantum computing.

Lloyd’s argument runs in a few short steps. The laws of physics bound how much information a physical system can register and how many elementary logic operations it can perform. The universe is a physical system. So the amount of information it can register, and the number of elementary operations it can have performed over its history, can be calculated. The result: the universe can have performed roughly 10 to the power of 120 operations on 10 to the power of 90 bits. Those numbers are staggering, yet still finite. Lloyd’s framing treats the cosmos as a giant quantum computer running within hard physical limits. The implication is clear: if the universe has a computational budget, it can, in principle, be spent.
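To see where a number like 10 to the power of 120 can come from, here is a rough order-of-magnitude sketch. It is not Lloyd’s full calculation. It assumes the Margolus-Levitin bound (a system with energy E performs at most 2E/(πħ) operations per second) and a universe near critical density, in which case the total reduces, up to factors of order one, to the square of the universe’s age measured in Planck times. The age used and the helper names are assumptions made for this example.

```python
import math

# Physical constants in SI units
HBAR = 1.054571817e-34   # reduced Planck constant, J*s
G = 6.67430e-11          # gravitational constant, m^3 kg^-1 s^-2
C = 299_792_458.0        # speed of light, m/s

SECONDS_PER_YEAR = 3.156e7
AGE_YEARS = 13.8e9       # assumed age of the universe


def planck_time() -> float:
    """Planck time, sqrt(hbar * G / c^5), in seconds."""
    return math.sqrt(HBAR * G / C**5)


def max_operations(age_seconds: float) -> float:
    """Order-of-magnitude operation count: (age / Planck time) squared."""
    return (age_seconds / planck_time()) ** 2


age = AGE_YEARS * SECONDS_PER_YEAR
ops = max_operations(age)
print(f"Planck time: {planck_time():.2e} s")
print(f"log10(operations): {math.log10(ops):.1f}")
```

Because factors of order one are dropped and the assumed age matters, the output should be read as landing within a couple of orders of magnitude of the quoted figure, not as reproducing it exactly.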

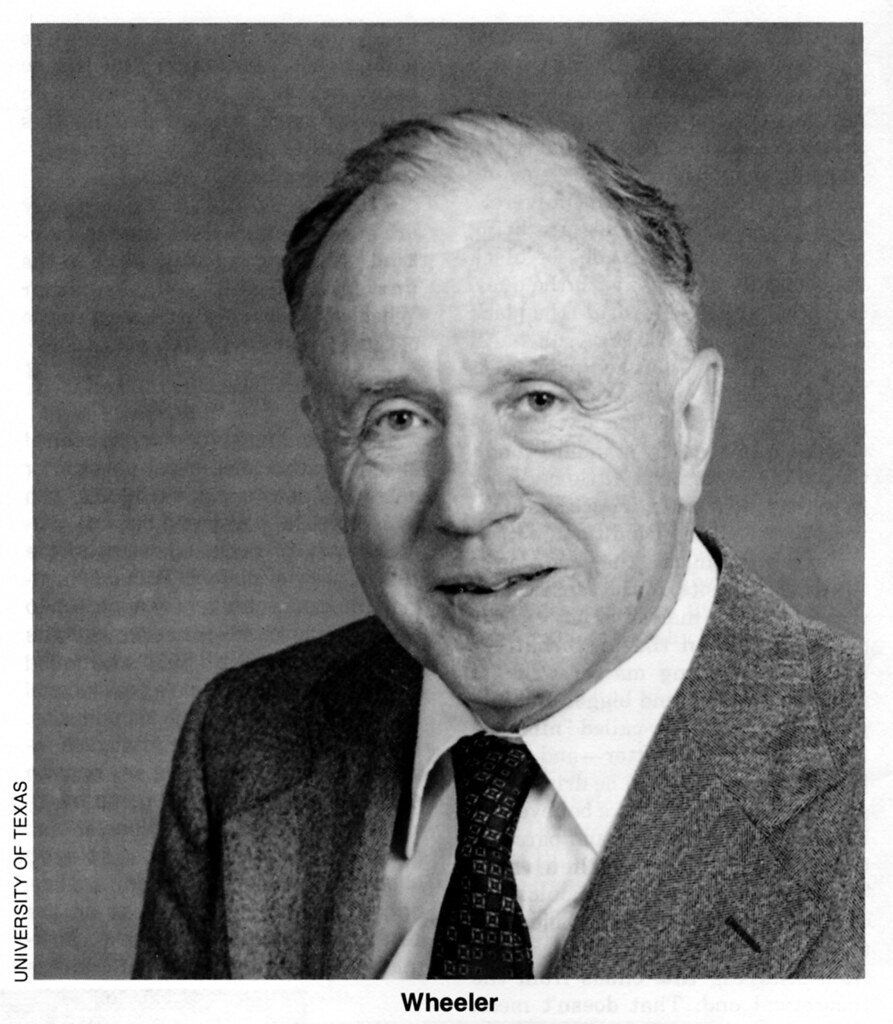

John Archibald Wheeler and “It from Bit”

Wheeler is a legendary figure in physics. He worked with Niels Bohr to explain nuclear fission, worked on the hydrogen bomb at Los Alamos, and taught many eminent physicists including Richard Feynman, Kip Thorne and Hugh Everett. He is often called the father of modern general relativity, was key in developing our understanding of black holes, and coined terms including “wormhole” and “quantum foam.” Wheeler categorised his long and productive life in physics into three periods: “Everything is Particles,” “Everything is Fields,” and “Everything is Information.”

In 1990, Wheeler suggested that at the smallest scale, physics is binary. The amount of information needed to describe the universe is not infinite but ultimately limited to binary choices. According to this “it from bit” concept, all things physical are information-theoretic in origin. Every particle, every field of force, even the spacetime continuum itself, derives its function, its meaning, its very existence from the apparatus-elicited answers to yes-or-no questions, binary choices, bits. This was a radical reframing. Reality, in Wheeler’s view, doesn’t just produce information as a byproduct. Information is what reality is made of. Wheeler was one of the first prominent physicists to propose that reality might not be wholly physical, and that in some sense the cosmos must be a “participatory” phenomenon requiring the act of observation. If that view is correct, then the universe doesn’t just resemble a computation. It is one.

Max Tegmark and the Mathematical Universe

Max Tegmark, a cosmologist at MIT, took Wheeler’s information-first intuition and pushed it further. His Mathematical Universe Hypothesis, developed through the early 2000s and formally published in a 2007 paper in Foundations of Physics, proposes that the universe is not merely described by mathematics. It is a mathematical structure. Every entity, every law, every physical constant is a feature of an abstract mathematical object that simply exists. Observers are self-aware substructures within that object.

The connection to computational limits takes one further step. A mathematical structure is not automatically computable, so Tegmark also considered a stronger variant, the Computable Universe Hypothesis, under which the structure that makes up reality is defined by computable functions. If that stronger version holds, then questions about processing speed, memory capacity, and resolution become meaningful. Tegmark’s framework implies that physical constants and the apparent discreteness of quantum states aren’t arbitrary features but structural necessities of the particular mathematical object we inhabit. Some theorists have drawn an analogy: just as a video game has a polygon count and a rendering distance, our universe may have hard limits on resolution. The Planck length, at roughly 1.6 times 10 to the minus 35 meters, is often cited as the smallest meaningful unit of space. Whether it represents a true physical floor or something more computational remains genuinely open.
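The Planck length is not a measured grid spacing. It is a combination of three constants, the square root of ħG/c³. The short sketch below computes it and, purely to illustrate the “resolution” analogy, counts how many Planck-sized cells would fit in a cubic meter. Treating space as a grid of such cells is an assumption of the analogy, not an established result, and the function names are invented for this example.

```python
import math

# Physical constants in SI units
HBAR = 1.054571817e-34   # reduced Planck constant, J*s
G = 6.67430e-11          # gravitational constant, m^3 kg^-1 s^-2
C = 299_792_458.0        # speed of light, m/s


def planck_length() -> float:
    """Planck length, sqrt(hbar * G / c^3), in meters."""
    return math.sqrt(HBAR * G / C**3)


def cells_per_cubic_meter() -> float:
    """Hypothetical 'voxel count': Planck-sized cubes per cubic meter."""
    return 1.0 / planck_length() ** 3


print(f"Planck length: {planck_length():.3e} m")
print(f"log10(cells per cubic meter): {math.log10(cells_per_cubic_meter()):.1f}")
```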

Silas Beane and the Search for a Lattice

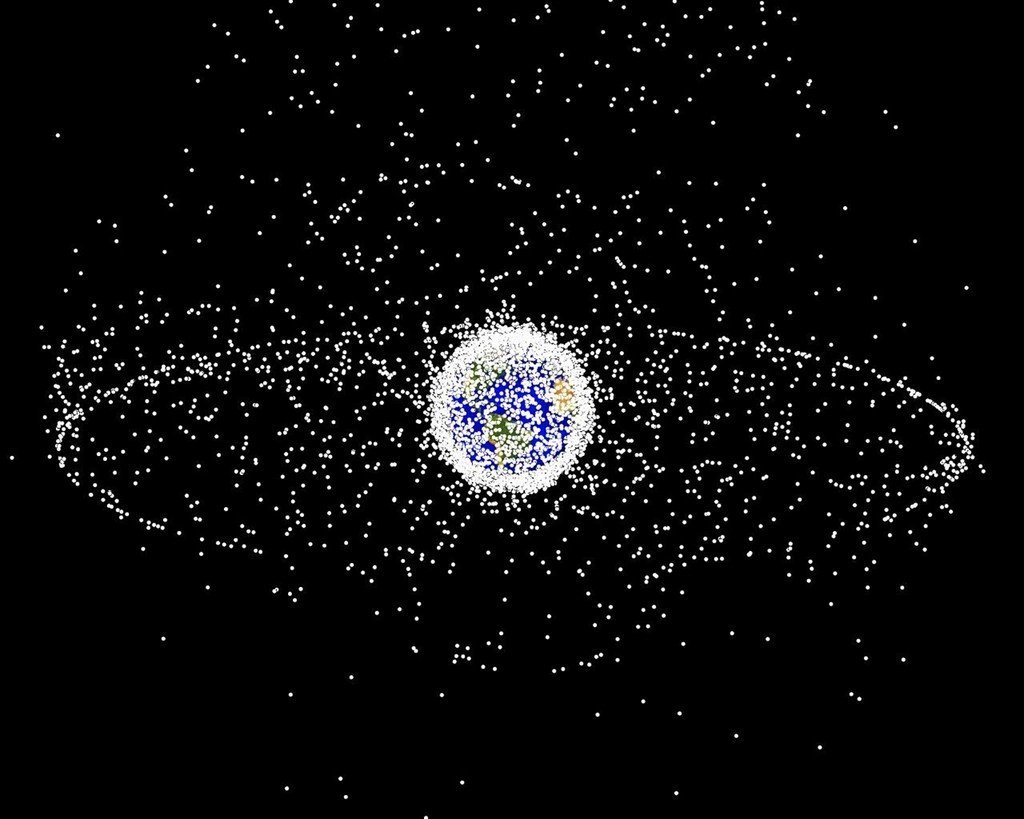

Beane and colleagues explored the observable consequences of the hypothesis that the observed universe is a numerical simulation performed on a cubic space-time lattice or grid. They motivated the scenario by extrapolating current trends in the computational resources required for lattice QCD into the future. This was a meaningful step. Rather than treating the simulation hypothesis as untestable philosophy, Beane’s team looked for physical signatures that a discrete computational substrate would actually leave behind.

Under the assumption of finite computational resources, a simulation of the universe would have to divide the space-time continuum into a discrete set of points, and that discretization may leave observable traces. Think of it like pixelation in an image: in a coarse enough image, the grid itself starts to show up as patterns the original scene never contained. Working by analogy with the simulations that lattice-gauge theorists run today to build up nuclei from the underlying theory of strong interactions, the team studied several observational consequences of a grid-like space-time. The most striking is that the distribution of the highest-energy cosmic rays would exhibit a degree of rotational symmetry breaking, an anisotropy, that reflects the structure of the underlying lattice. Such an anisotropy, if observed, would be consistent with the simulation hypothesis. No definitive anisotropy of this kind has been confirmed to date, but the approach remains one of the few concrete experimental frameworks the hypothesis has produced.
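Why would a grid break rotational symmetry? A toy example makes the idea concrete. The sketch below uses the textbook dispersion relation for a free massless field on a cubic lattice. It is not the lattice QCD setup Beane’s team analyzed, and the lattice spacing and momenta are arbitrary illustrative values in natural units. It compares the energy of a particle moving along a grid axis with one moving along the diagonal at the same momentum magnitude.

```python
import math


def lattice_energy(momentum, spacing):
    """Energy of a free massless field on a cubic lattice (natural units).

    Each momentum component k is replaced by (2 / a) * sin(a * k / 2),
    the standard lattice substitution for spacing a.
    """
    return math.sqrt(
        sum((2.0 / spacing * math.sin(spacing * k / 2.0)) ** 2 for k in momentum)
    )


def continuum_energy(momentum):
    """Energy of the same massless field in continuous space: E = |p|."""
    return math.sqrt(sum(k * k for k in momentum))


SPACING = 1.0  # hypothetical lattice spacing

for magnitude in (0.01, 0.5, 2.0):
    along_axis = (magnitude, 0.0, 0.0)
    d = magnitude / math.sqrt(3.0)
    along_diagonal = (d, d, d)  # same |p|, different direction
    print(
        f"|p| = {magnitude:>4}: "
        f"continuum = {continuum_energy(along_axis):.4f}, "
        f"axis = {lattice_energy(along_axis, SPACING):.4f}, "
        f"diagonal = {lattice_energy(along_diagonal, SPACING):.4f}"
    )
```

At momenta far below the inverse lattice spacing, the two directions agree with each other and with the continuum. As the momentum approaches the inverse spacing, the axis and diagonal energies separate. This is the qualitative reason the search focuses on the highest-energy cosmic rays.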

Where the Science Actually Stands

New research from UBC Okanagan argues, on mathematical grounds, that the universe cannot be simulated. Published in 2025, the work by Mir Faizal and colleagues represents the most recent serious challenge to the computational view of reality. Drawing on Gödel’s incompleteness theorem and related results, the authors contend that even an information-based foundation cannot fully describe reality using computation alone. A complete and consistent description of everything, they argue, requires what they call “non-algorithmic understanding,” something no computation can replicate.

From a different angle, a 2025 paper by astrophysicist Franco Vazza examined the energy requirements for simulating the universe and found them to be staggering, even for a reduced-resolution version. Vazza characterized the simulation hypothesis as “physically unrealistic” on the grounds of the computational resources required to calculate physical reality. His calculations indicate that such a simulation is not achievable if the simulator’s universe is governed by physics like our own. Critics have responded that objections which assume a simulation must be fully comprehensive do not engage the simulation hypothesis as originally formulated, and Bostrom himself later clarified that such objections miss the point. Meanwhile, a mathematical framework introduced in late 2025 by Santa Fe Institute professor David Wolpert challenged the popular belief that deeper levels of simulation must be computationally weaker than the levels above them. Wolpert showed that this isn’t required by the mathematics: simulations do not have to degrade, and infinite chains of simulated universes remain fully consistent within the theory.

The honest summary is this: the simulation hypothesis has never been confirmed by experiment, and recent mathematical work suggests serious obstacles to its being true in any strict sense. How physically realistic the idea is, given the constraints that link information and energy, remains an active area of inquiry. What the debate has genuinely produced, though, is a richer understanding of how information, energy, and physical law are related. The physicists who explored it weren’t chasing science fiction. They were asking one of the oldest questions in new language: why does the universe have limits at all, and what do those limits mean?