The Scale of the Target: A Rapidly Growing Genetic Database Economy

The sheer volume of genetic data now sitting in commercial databases is staggering. Estimates of the global direct-to-consumer genetic testing market vary with the source and with how the market is defined: one valuation puts it at roughly four billion dollars in 2024, while another estimated close to two billion dollars in 2023 and projected just over two billion for 2024. Every estimate points upward. That growth curve represents tens of millions of people willingly handing over the most permanent data they’ll ever generate.

More than twenty-six million people in the U.S. have already taken DNA tests, and that number keeps climbing as tests become cheaper and more accessible. Ancestry alone operates the world’s largest consumer DNA network, with a user base exceeding twenty million people. From a hacker’s perspective, that’s not a customer base. That’s a target-rich environment of immutable, highly personal data – the kind that never expires and can never be reset.

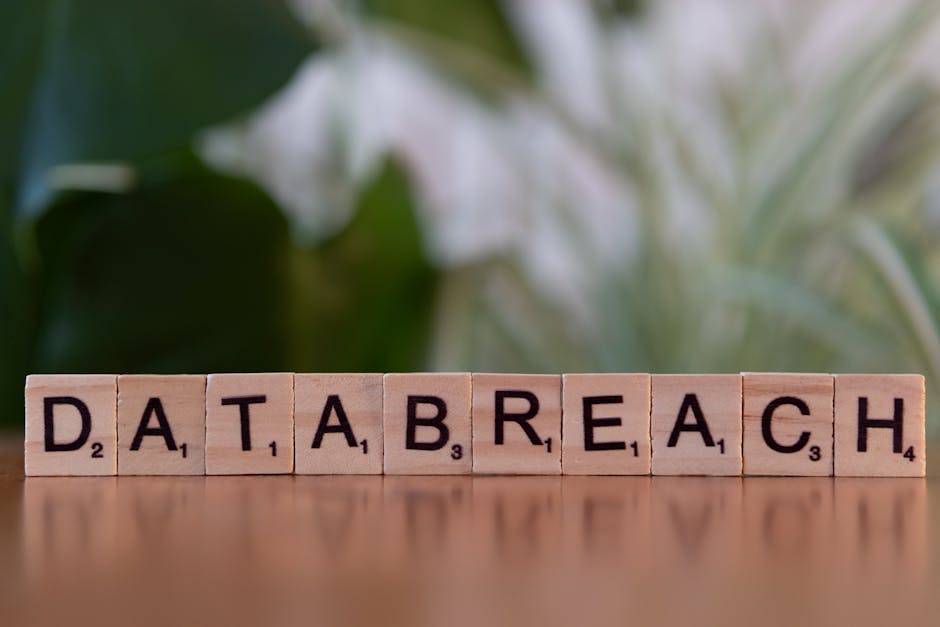

The 23andMe Breach: A Case Study in What’s at Stake

The breach first came to light in October 2023, when an attacker claimed on an online forum to hold the profile information of millions of 23andMe users. Through a relatively small number of compromised accounts, the hacker had obtained the data of around 6.9 million users, because the site's relative-matching features exposed profile information belonging to people connected to those accounts. The attack itself was almost disarmingly simple. It was a credential stuffing attack, in which cybercriminals use automated tools to try username-password pairs stolen in other breaches against a different platform. It succeeds wherever people reuse passwords, and it works best against sites that do not enforce two-factor authentication.
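From the defender's side, credential stuffing tends to leave a recognizable trace: one source trying many different accounts and failing on nearly all of them. The following is a minimal sketch of that heuristic, not a description of any company's detection system. The function name, the event format, and both thresholds are assumptions made for illustration.

```python
from collections import defaultdict

def flag_stuffing_sources(events, min_accounts=20, max_success_rate=0.1):
    """Return source IPs whose login pattern resembles credential stuffing.

    events: iterable of (source_ip, username, succeeded) tuples.
    The thresholds are illustrative assumptions, not tuned values.
    """
    accounts = defaultdict(set)   # ip -> distinct usernames attempted
    attempts = defaultdict(int)   # ip -> total login attempts
    successes = defaultdict(int)  # ip -> successful logins
    for ip, username, succeeded in events:
        accounts[ip].add(username)
        attempts[ip] += 1
        if succeeded:
            successes[ip] += 1

    flagged = []
    for ip, names in accounts.items():
        success_rate = successes[ip] / attempts[ip]
        # Many distinct accounts plus a very low hit rate is the signature.
        if len(names) >= min_accounts and success_rate <= max_success_rate:
            flagged.append(ip)
    return sorted(flagged)

# Fabricated log: one source sprays 50 accounts, another is a normal user.
events = [("203.0.113.7", f"user{i}", i == 0) for i in range(50)]
events += [("198.51.100.4", "alice", False), ("198.51.100.4", "alice", True)]
print(flag_stuffing_sources(events))
```

Real attackers spread attempts across many addresses, so a per-source count like this is only a first signal. It would normally sit alongside breached-password checks and rate limiting.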

The information stolen included names, profile photos, birth years, locations, family surnames, grandparents’ birthplaces, ethnicity estimates, mitochondrial DNA haplogroup, and Y-chromosome DNA haplogroup. What makes this especially alarming is that at the time of the breach, fewer than twenty-two percent of 23andMe customers had opted into multi-factor authentication, meaning that for more than three-quarters of users, a password was the only control protecting access to their account. One batch of data was advertised on a hacking forum as a list of Ashkenazi Jews, and another as a list of people of Chinese descent, sparking concerns about targeted attacks. The consequences for 23andMe itself were severe: the breach badly damaged the company’s reputation and helped push it into bankruptcy on March 23, 2025.

Why Genetic Data Is the Ultimate Hacker Prize

Most stolen data has a shelf life. Credit card numbers get frozen. Social Security numbers get flagged. Even stolen email passwords lose value once changed. Genetic data doesn’t age. Unlike most forms of personal data, genetic information is permanent – you can change a password, but not your DNA. That makes it especially important to handle carefully, because how it’s collected, stored, or shared can affect not just one person, but their entire family or community.

The combination of personal information that could be found in genetic accounts, such as postcodes, race, ethnic origin, familial connections, and health data, could potentially be exploited by malicious actors for financial gain, surveillance, or discrimination. There’s also a dark-market premium attached to it. A single stolen medical record sells for roughly two hundred sixty to three hundred ten dollars, about ten times the value of a stolen credit card. The reason is durability: if a fraudster gets your credit card, you cancel it, but your medical history, your Social Security number, your birth date, and your insurance ID cannot simply be reset, which makes medical data a long-term asset for attackers. Genetic data, with its additional depth and permanence, sits at the top of that value hierarchy.

From Stolen DNA to Digital Twins: How the Threat Evolves

The concept of a “digital twin” has legitimate uses in manufacturing, engineering, and healthcare, where it means a dynamic virtual replica of a real-world system. A digital twin can also represent a person, such as an employee or a persona. It is not static: it takes in the same data as its real-world counterpart, often in real time, and changes accordingly. In the wrong hands, this same concept becomes something far more dangerous.

Trend Micro’s 2025 predictions report warned of the potential for malicious “digital twins,” where breached or leaked personal information is used to train a large language model to mimic the knowledge, personality, and writing style of a victim. When deployed in combination with deepfake video and audio and compromised biometric data, they could be used to commit identity fraud or to “honeytrap” a friend, colleague, or family member. Genetic data, with its ethnic background, health predispositions, and biological markers, adds an entirely new layer of credibility to these simulations. A hacker could create a digital twin of an existing persona and “insert it into your environment and then watch and participate in your organization and then inject malware into the ecosystem.”

The Re-Identification Problem: Anonymized Data Isn’t Really Anonymous

Many genetic testing companies reassure their users that research data is anonymized before it is shared with third parties. The science of genomics, however, tells a more complicated story. Removing protected health information does not, on its own, prevent re-identification. Researchers have repeatedly demonstrated that combining seemingly anonymous genetic datasets with other publicly available information can identify individuals. This isn’t a theoretical risk. It’s a documented reality.
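One way to see why stripping names is not enough is to count how many records in a released dataset are unique on the attributes that remain. A row that is unique on a handful of so-called quasi-identifiers can be matched to a named person by anyone holding a second dataset with the same fields. The sketch below runs that count on fabricated rows. The field names and the helper `unique_on` are hypothetical, and this is a simplified uniqueness check rather than a full re-identification study.

```python
from collections import Counter

def unique_on(records, quasi_identifiers):
    """Return the records whose quasi-identifier combination occurs once."""
    def key(record):
        return tuple(record[q] for q in quasi_identifiers)

    counts = Counter(key(r) for r in records)
    return [r for r in records if counts[key(r)] == 1]

# Fabricated "anonymized" release: no names, but some rows still stand alone.
released = [
    {"birth_year": 1980, "region": "A", "haplogroup": "H1"},
    {"birth_year": 1980, "region": "A", "haplogroup": "H1"},
    {"birth_year": 1975, "region": "B", "haplogroup": "U5"},
    {"birth_year": 1992, "region": "A", "haplogroup": "J2"},
]

exposed = unique_on(released, ["birth_year", "region", "haplogroup"])
print(len(exposed), "of", len(released), "rows are unique")
```

In this toy release, half the rows are unique on just three fields. Adding more attributes to each row only increases that share.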

The Genetic Information Nondiscrimination Act of 2008 provides protections against discrimination by health insurers or employers on the basis of genetic information, but it does not clearly define what information needs protection nor how such protection is carried out. Direct-to-consumer companies occupy a further gray zone: DTC companies are typically not engaged in providing healthcare services, and are thus not legally required to comply with HIPAA. That creates a significant gap – millions of people’s genetic profiles being held by companies that carry fewer legal obligations than a standard hospital clinic. The combination of re-identification techniques and weakly regulated databases is what makes stolen genetic data genuinely dangerous in the era of AI-assisted fraud.

AI-Powered Fraud and the Synthetic Identity Machine

Generative AI has fundamentally changed what a determined bad actor can do with stolen personal data. It accelerates identity fraud by reducing the cost of producing convincing deception and by compressing the attacker's learning cycle: failed attempts can be iterated into more effective ones quickly, then reproduced across many targets and workflows. Genetic data feeds directly into this system by providing ethnic background, biological age markers, predispositions, and family linkages that make a synthetic profile far more convincing.

Synthetic identities were used in roughly one in five first-party fraud cases detected in 2025. These are composite identities built from a mix of real and fabricated data, and they can fool many identity verification systems. Sophisticated fraud almost tripled in 2025, with the share of advanced fraud attempts surging from about ten percent in 2024 to twenty-eight percent by the end of 2025, an increase of nearly one hundred eighty percent. Nation-state actors are already operating at scale. One North Korean software development team created at least one hundred thirty-five synthetic identities by scraping images and personal data, generating fake passports, and automating email and professional networking accounts to generate leads, in what researchers described as a complex multistage process to generate synthetic identities at scale.
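The "almost tripled" and "nearly one hundred eighty percent" figures describe the same change in two ways, which a quick calculation makes explicit. The sketch below assumes the starting share is exactly ten percent. Since the source says "about ten percent", the true increase may differ slightly from the round number.

```python
def percent_increase(old, new):
    """Relative change from old to new, expressed in percent."""
    return (new - old) / old * 100

share_2024 = 10.0  # assumed exact; the source says "about ten percent"
share_2025 = 28.0

print(round(percent_increase(share_2024, share_2025)))  # relative increase, %
print(share_2025 / share_2024)                          # growth multiple
```

A rise from ten to twenty-eight is a 2.8-fold multiple, which is the same thing as a 180 percent increase over the starting value.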

The Financial Cost of Getting It Wrong

When genetic and health data breaches occur, the financial consequences extend well beyond the affected individuals. The healthcare industry suffers the highest average breach costs of any sector, at nearly eleven million dollars per incident. Healthcare data breaches typically take over two hundred days before discovery – longer than the average across other industries. That extended exposure window gives attackers time to exploit, resell, and weaponize data long before anyone realizes a breach even occurred.

23andMe agreed to a thirty million dollar settlement in September 2024 to resolve its consolidated litigation. The settlement was moved into bankruptcy court following the company’s March 2025 bankruptcy filing and received final court approval on January 30, 2026. A joint investigation by Canada’s Privacy Commissioner and the UK’s Information Commissioner’s Office resulted in 23andMe being fined £2.31 million for failing to protect genetic data. The financial arithmetic is sobering: breach costs, legal settlements, regulatory fines, and reputational damage combined make genetic data security a commercial survival issue, not just a privacy concern.

Regulation and the Gap That Needs Closing

The regulatory landscape around genetic data remains patchy at best. A joint investigation by Canadian and UK regulators concluded that 23andMe had failed to implement appropriate security measures, including mandatory multi-factor authentication and adequate password requirements, and that the company’s detection systems had missed clear warning signs of the credential stuffing attack for months. That kind of institutional failure doesn’t happen in a vacuum – it reflects an environment where the rules are still being written and accountability mechanisms are still being built.

A patchwork of state laws governing DNA data makes the genetic data of millions potentially vulnerable to being sold off, or even mined by law enforcement. Consumer protection in this space varies dramatically from country to country and state to state, and many of the companies holding the most sensitive biological data on earth operate under terms of service rather than hard regulatory floors. Where firm rules do exist, they set a clear bar. Data protection law, as the UK regulator describes it, requires organizations to take proactive steps to protect themselves against cyberattacks, and its guidance recommends multi-factor authentication wherever possible, particularly when sensitive personal information is being collected, along with regular vulnerability scanning and prompt security patching. The distance between where many companies currently stand and those requirements remains uncomfortably wide.
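For readers unfamiliar with what multi-factor authentication adds, one common second factor is the time-based one-time password (TOTP) defined in RFC 6238. This is the rotating six-digit code shown by authenticator apps. The following is a minimal sketch of that algorithm with its default SHA-1 settings, using only the Python standard library. It is an illustration of the general technique, not a description of any company's login system, and the `verify` helper and its one-step drift window are assumptions.

```python
import hashlib
import hmac
import struct

def totp(secret: bytes, unix_time: int, step: int = 30, digits: int = 6) -> str:
    """Compute an RFC 6238 time-based one-time password (HMAC-SHA-1)."""
    counter = unix_time // step                      # 30-second time window
    digest = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = digest[-1] & 0x0F                       # dynamic truncation
    value = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(value % 10 ** digits).zfill(digits)

def verify(secret: bytes, submitted: str, unix_time: int,
           window: int = 1, step: int = 30, digits: int = 6) -> bool:
    """Accept codes from the current window and `window` steps either side."""
    for drift in range(-window, window + 1):
        t = unix_time + drift * step
        if t < 0:
            continue
        # compare_digest avoids leaking match position through timing.
        if hmac.compare_digest(totp(secret, t, step, digits), submitted):
            return True
    return False

# Shared secret taken from the RFC 6238 test vectors, not a real credential.
secret = b"12345678901234567890"
print(totp(secret, 59, digits=8))  # RFC 6238 lists 94287082 for this input
```

Because the code depends on a secret that never travels with the password, a password stolen from another site is not enough on its own to pass this check.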

Conclusion: The Data You Can Never Take Back

Genetic data occupies a category entirely its own. It is simultaneously a health record, a family tree, an ethnic profile, a biological fingerprint, and a permanent identifier – all in one file. The convergence of large commercial DNA databases, AI-powered fraud tools, and insufficient regulatory protection has created conditions where the risks are not hypothetical. They’re measurable, demonstrable, and growing.

The 23andMe collapse, the proliferation of synthetic identity fraud, and the demonstrated ability to re-identify people from supposedly anonymous genetic records all point in the same direction. Genetic privacy is not a niche concern for bioethicists. It’s a mainstream security issue that touches anyone who has ever spit into a tube and mailed it to a company that promised to tell them who they are. The uncomfortable truth is that in doing so, they may have also told a great deal to people they never intended to meet.