A Shocking New Era: Crime Stopped Before It Starts?

Imagine a world where crimes are prevented before they happen. It sounds like something out of a sci-fi movie, yet police departments are already experimenting with it, powered by artificial intelligence. Predictive policing uses AI to analyze mountains of data, from past crime reports to social media chatter, in the hope of stopping trouble before it begins. The idea is both inspiring and unsettling. It promises safety, but at what cost? The tug-of-war between fear and hope is real, and the stakes for our communities are high.

How AI Is Rewriting the Rules of Policing

AI is like a supercharged detective that never sleeps. By crunching numbers and spotting patterns, it can help police departments estimate where and when crimes are most likely to happen. These systems sift through crime logs, weather changes, and even community events to predict hotspots of criminal activity. Officers then use this information to patrol certain neighborhoods more closely or respond faster to emerging threats. The speed of these systems can be impressive, but their accuracy depends on the data behind them, and they raise tough questions about who gets watched and why. Is this the future we want, or is it a slippery slope?
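To make the idea of a "hotspot" concrete, here is a minimal sketch of the simplest possible approach: divide a map into grid cells and count past incidents in each one. The coordinates, the 1 km cell size, and the function names are all made up for illustration. Real systems use far richer models, and this is not how any particular vendor's product works.

```python
from collections import Counter

# Hypothetical past incidents as (x, y) positions in kilometres.
incidents = [(0.2, 0.3), (0.4, 0.1), (0.7, 0.9), (1.5, 0.2), (0.1, 0.8), (2.3, 2.1)]

CELL_KM = 1.0  # assumed grid cell size


def cell_of(x, y, size=CELL_KM):
    """Map a coordinate to the grid cell that contains it."""
    return (int(x // size), int(y // size))


def rank_hotspots(points, top_n=2):
    """Count incidents per cell and return the busiest cells first."""
    counts = Counter(cell_of(x, y) for x, y in points)
    return counts.most_common(top_n)


hotspots = rank_hotspots(incidents)
print(hotspots)  # the first entry is cell (0, 0) with 4 incidents
```

Notice that the ranking only reflects incidents that were recorded. A cell with few reports looks quiet whether or not it actually is.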

When Technology Inherits Our Biases

The promise of AI is that it’s neutral, but in reality, it’s only as fair as the data we feed it. If the historical crime data is skewed, perhaps because certain neighborhoods were policed more aggressively in the past, the AI learns to target those same places again. More patrols then produce more recorded incidents, which makes those places look even riskier the next time around. This can trap entire communities in a cycle of suspicion and surveillance, even if they’ve done nothing wrong. It’s a bit like teaching a dog to fetch, but only ever throwing the ball in one direction. Some people call this “digital redlining,” and it’s a problem that can’t be ignored if we want justice to mean something.
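A toy simulation can show why skewed data does not fix itself. In this sketch, two areas have exactly the same underlying crime rate, but area A starts with more recorded incidents. Patrols are assigned in proportion to recorded crime, and new records grow in proportion to patrols. Every number and name here is an assumption chosen for illustration, not a model of any real city.

```python
def simulate_patrol_shares(recorded_a=60.0, recorded_b=40.0,
                           true_rate=0.1, patrols=100, rounds=5):
    """Return area A's share of patrols in each round.

    Both areas share the same true_rate; only the starting records differ.
    """
    shares = []
    for _ in range(rounds):
        # Patrols follow the recorded data, not the (equal) true rates.
        share_a = recorded_a / (recorded_a + recorded_b)
        patrols_a = patrols * share_a
        patrols_b = patrols - patrols_a
        # More officers present means more incidents observed and logged.
        recorded_a += patrols_a * true_rate
        recorded_b += patrols_b * true_rate
        shares.append(round(share_a, 3))
    return shares


shares = simulate_patrol_shares()
print(shares)
```

Area A keeps 60 percent of the patrols in every round, even though the two areas are identical underneath. In this simplified setup the data never gets a chance to correct the original imbalance.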

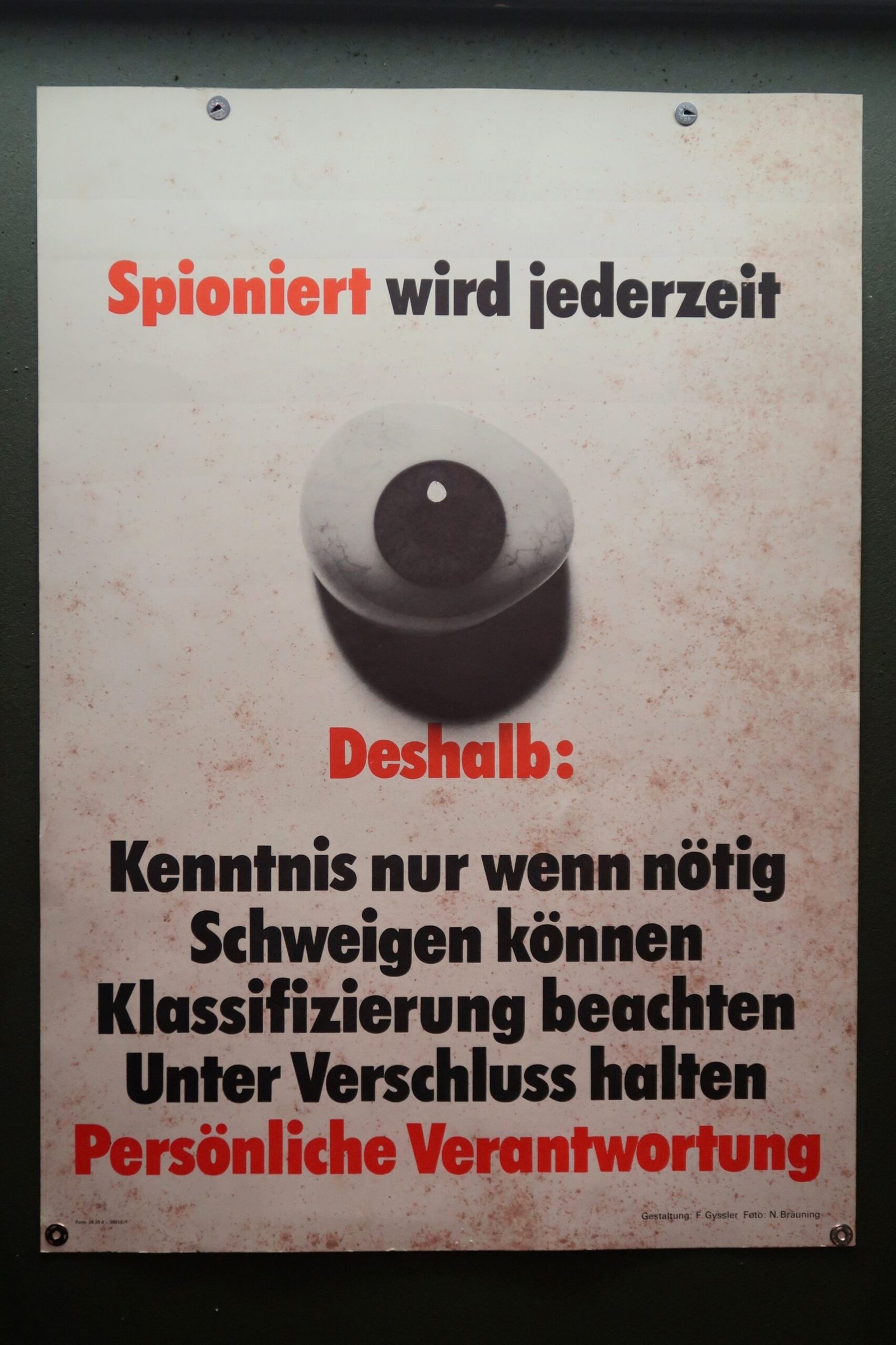

Is Your Privacy the Price of Safety?

Every time you post online, walk down a street with cameras, or swipe your metro card, you’re leaving digital breadcrumbs behind. Predictive policing often means collecting all these breadcrumbs and piecing together a picture of where crime might brew next. But who decides what data gets collected, and what if it’s used without your knowledge? The idea that someone, somewhere, is watching your moves can feel downright creepy. People worry that their ordinary actions could be misread by an algorithm, leading to more surveillance or unnecessary attention from the police.

Who’s Really Responsible When AI Points the Finger?

It’s easy to blame a computer when things go wrong, but AI doesn’t work in a vacuum. When an algorithm wrongly predicts that a certain area is dangerous, who is held accountable for the consequences? Is it the software engineers, the police, or the city officials who approved the system? The lack of clear responsibility can make it difficult for people who feel unfairly targeted to get answers—or justice. This gray area is a breeding ground for mistrust, and it raises the unsettling possibility that nobody is truly in charge.

Communities on Edge: The Human Cost

When predictive policing zeroes in on a neighborhood, the people living there feel it—sometimes in ways outsiders can’t see. More police cars, more stops, and more questions can make ordinary life feel tense and stressful. For families just trying to get by, this constant presence can feel like a spotlight they never asked for. Over time, it erodes the trust between residents and law enforcement, making it harder for police to do their jobs and for communities to feel safe in their own homes.

Walking the Tightrope: Public Safety vs. Civil Liberties

There’s no doubt that stopping crime before it happens could save lives, but at what cost to our freedoms? Balancing the need for security with respect for civil liberties is like walking a tightrope blindfolded. On one side, there’s the promise of safety; on the other, the risk of losing privacy and autonomy. Policymakers have to make tough choices about where to draw the line, and those choices can shape the future of law enforcement for decades to come. It’s a debate that stirs strong feelings on both sides.

Why Oversight Can’t Be Optional

Regulation and oversight aren’t just bureaucratic red tape—they’re the safety rails that keep predictive policing from running off the tracks. Setting clear rules for how data is collected, stored, and used isn’t just good practice; it’s essential for maintaining public trust. Oversight bodies can help ensure that AI systems are tested for fairness and that their decisions can be explained in plain language. Without these guardrails, the risk of abuse grows, and so does the danger of communities losing faith in the very people meant to protect them.
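What might "tested for fairness" look like in practice? One common check, sketched below with invented records, compares how often the system flags areas where nothing actually happened, broken down by group. The field names, the groups "A" and "B", and the data are hypothetical, and a real audit would look at many more measures than this one.

```python
# Hypothetical audit records: was the area flagged, and did an incident occur?
records = [
    {"group": "A", "flagged": True,  "incident": False},
    {"group": "A", "flagged": True,  "incident": False},
    {"group": "A", "flagged": False, "incident": False},
    {"group": "A", "flagged": False, "incident": False},
    {"group": "A", "flagged": True,  "incident": True},
    {"group": "B", "flagged": True,  "incident": False},
    {"group": "B", "flagged": False, "incident": False},
    {"group": "B", "flagged": False, "incident": False},
    {"group": "B", "flagged": False, "incident": False},
    {"group": "B", "flagged": True,  "incident": True},
]


def false_positive_rate(rows, group):
    """Share of a group's incident-free records that were flagged anyway."""
    quiet = [r for r in rows if r["group"] == group and not r["incident"]]
    if not quiet:
        return None  # nothing to measure for this group
    return sum(r["flagged"] for r in quiet) / len(quiet)


rate_a = false_positive_rate(records, "A")
rate_b = false_positive_rate(records, "B")
print(rate_a, rate_b, rate_a - rate_b)
```

With this made-up data, group A is wrongly flagged twice as often as group B. A gap like that is exactly the kind of result an oversight body would want explained in plain language.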

Real-Life Lessons: Cities on the Front Lines

Cities like Los Angeles and Chicago have been testing grounds for predictive policing, with mixed results. In some cases, the technology helped police respond more quickly to crime spikes. But there have also been stories of neighborhoods feeling unfairly targeted, sparking protests and public outcry. Los Angeles ended its PredPol program in 2020, and Chicago retired its Strategic Subject List around the same time. These real-world examples show that technology alone can’t solve the deep-rooted issues in policing, and it can sometimes make them worse. Looking at what’s worked, and what hasn’t, gives valuable clues for cities considering similar moves.

The Human Side of the Equation

Behind every data point is a person—a parent, a student, a worker—just trying to live their life. Predictive policing can sometimes turn these individuals into statistics, forgetting the messy, complicated reality of human behavior. It’s easy to trust a machine’s cold logic, but people aren’t just numbers on a spreadsheet. Policymakers and police officers need to remember the human stories behind the predictions, or risk losing sight of what really matters: dignity, fairness, and compassion.

Personal Reflections: Where Do You Draw the Line?

The debate over predictive policing isn’t just about technology or policy—it’s about values. As someone who’s watched this conversation unfold, I can’t help but wonder where I’d draw the line. Would I trade a bit of my privacy for a safer neighborhood, or does that open the door to something more sinister? It’s a tough call, and I don’t think there’s a one-size-fits-all answer. But I do know this: the questions we ask today will shape the future for all of us.