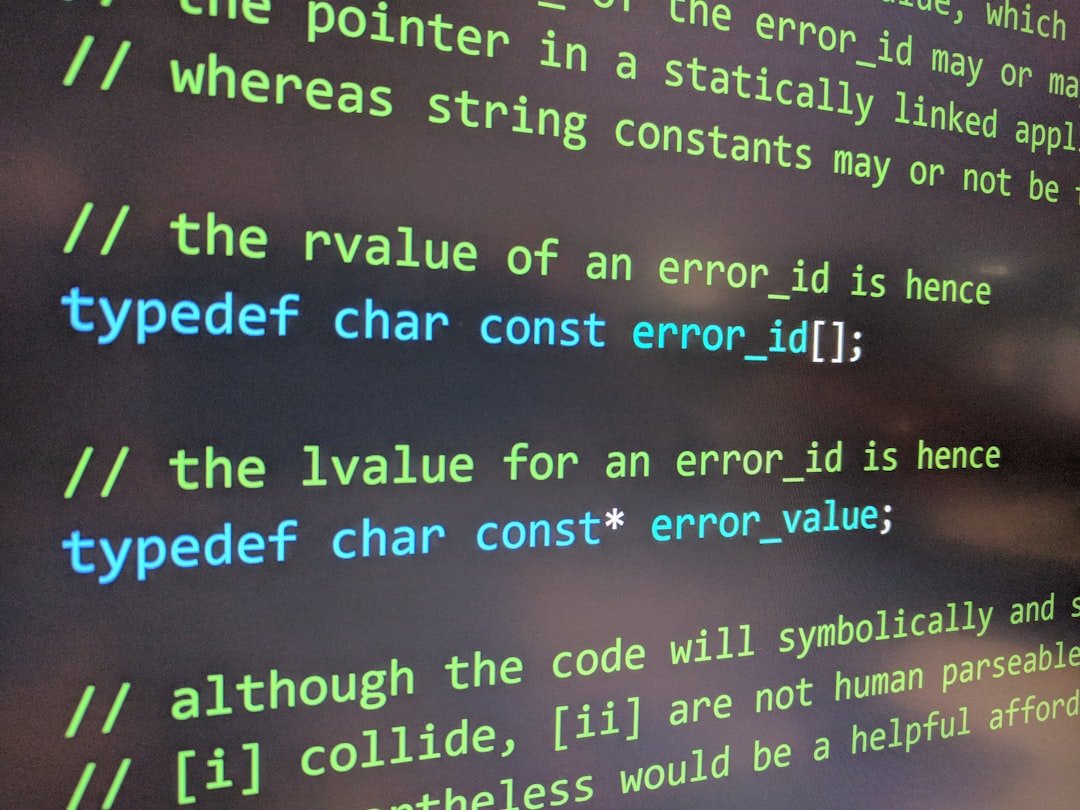

Unseen Malware in Magic Code (Image Credits: Unsplash)

Vibe coding emerged as a game-changer in software creation, enabling non-experts to build applications through simple natural language prompts to AI tools. OpenAI cofounder Andrej Karpathy popularized the term with a tweet in February 2025, sparking widespread experimentation across industries. While this approach lowers barriers to innovation, it introduces profound security and legal vulnerabilities that organizations ignore at their peril. Leaders now face the challenge of harnessing its power without compromising their defenses.

Unseen Malware in Magic Code

Picture a marketing team member building an interactive dashboard in minutes, only to unknowingly embed malicious code that siphons sensitive data. Vibe coding relies on AI models trained on vast, largely unvetted corpora of code, which can blend contributions from legitimate developers, malicious hackers, and even state actors. The person prompting the model rarely reviews the output and cannot trace where a given pattern came from. That leaves companies exposed to threats like data exfiltration or SQL injection flaws that let attackers read or destroy database contents.
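To make the SQL injection risk concrete, here is a minimal Python sketch of a pattern an AI assistant can plausibly produce for a quick user lookup, shown next to the safer parameterized form. It uses the standard-library `sqlite3` module; the `users` table and both function names are hypothetical, chosen only for illustration.

```python
import sqlite3


def find_user_unsafe(conn: sqlite3.Connection, username: str):
    # Vulnerable (hypothetical example): user input is spliced directly into
    # the SQL string, so a crafted value can change the query's logic.
    query = f"SELECT id, username FROM users WHERE username = '{username}'"
    return conn.execute(query).fetchall()


def find_user_safe(conn: sqlite3.Connection, username: str):
    # Safer: a parameterized query passes the input as data, never as SQL.
    query = "SELECT id, username FROM users WHERE username = ?"
    return conn.execute(query, (username,)).fetchall()


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, username TEXT)")
    conn.executemany("INSERT INTO users (username) VALUES (?)", [("alice",), ("bob",)])

    payload = "' OR '1'='1"
    print(find_user_unsafe(conn, payload))  # returns every row in the table
    print(find_user_safe(conn, payload))    # returns []
```

The only difference is whether user input is spliced into the SQL text or passed separately as a parameter, which is exactly the kind of detail a non-expert skimming generated code can miss.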

Legal pitfalls compound the issue. Generated code might infringe copyrights or patents, exposing firms to lawsuits they never anticipated. Unlike traditional development, where developers write, review, and own each change, vibe-coded programs can harbor bugs with no clear author responsible for fixing them. This opacity turns a productivity boon into a ticking time bomb inside the security perimeter.

Elevate AI Risks to Boardroom Priority

Security lapses from vibe coding are not confined to IT departments: because staff in finance, HR, sales, and beyond can now build their own tools, the risks spread across the whole organization. Senior executives must treat it as a strategic imperative, not a technical footnote. Delegating it solely to IT repeats past cybersecurity oversights that proved costly.

Organizations that succeed integrate oversight at the highest levels. Cross-functional teams ensure AI use aligns with enterprise goals while flagging anomalies early. This shift demands cultural change, prioritizing vigilance over unchecked enthusiasm.

Weave Protection into Core Processes

Static policies that gather digital dust cannot keep up with rapid AI adoption. Proactive measures embed risk detection directly into workflows, scanning code for vulnerabilities as it is generated. Emerging tools quantify threats, flag malware patterns, and suggest remediations before deployment.
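As a rough illustration of automated scanning, the sketch below uses Python's standard `ast` module to flag a few risky constructs in generated Python code before it ships. The deny-list, the `scan_source` function, and its messages are hypothetical; a toy check like this catches only obvious patterns and is no substitute for a dedicated static analysis tool.

```python
import ast

# Hypothetical deny-list for illustration; real scanners cover far more.
RISKY_CALLS = {"eval", "exec", "os.system", "pickle.loads"}


def _call_name(node: ast.Call) -> str:
    """Return a dotted name like 'os.system' for a call node, or '' if unknown."""
    func = node.func
    parts = []
    while isinstance(func, ast.Attribute):
        parts.append(func.attr)
        func = func.value
    if isinstance(func, ast.Name):
        parts.append(func.id)
        return ".".join(reversed(parts))
    return ""


def scan_source(source: str) -> list[tuple[int, str]]:
    """Return sorted (line number, message) findings for a Python source string."""
    findings = []
    for node in ast.walk(ast.parse(source)):
        if not isinstance(node, ast.Call):
            continue
        name = _call_name(node)
        if name in RISKY_CALLS:
            findings.append((node.lineno, f"risky call: {name}"))
        # subprocess.* calls with a literal shell=True hand a string to the shell.
        if name.startswith("subprocess.") and any(
            kw.arg == "shell"
            and isinstance(kw.value, ast.Constant)
            and kw.value.value is True
            for kw in node.keywords
        ):
            findings.append((node.lineno, f"{name} with shell=True"))
        # An f-string passed straight to .execute() suggests SQL built from input.
        if name.endswith(".execute") and node.args and isinstance(node.args[0], ast.JoinedStr):
            findings.append((node.lineno, "SQL built with an f-string"))
    return sorted(findings)


if __name__ == "__main__":
    sample = (
        "import os, subprocess\n"
        "os.system('rm -rf /tmp/cache')\n"
        "subprocess.run(cmd, shell=True)\n"
        "conn.execute(f\"SELECT * FROM users WHERE name = '{name}'\")\n"
    )
    for line, message in scan_source(sample):
        print(line, message)
```

Parsing the code rather than searching its text means comments and string contents don't trigger false alarms, but the checker still misses anything indirect, such as an f-string assigned to a variable before being passed to `execute`.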

Consider these essential practices:

- Automate scans for every AI-generated output, prioritizing high-risk functions like data access.

- Enforce approval gates for production use, involving security reviews.

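- Train teams on prompt engineering, for example explicitly requesting input validation and parameterized queries, so generated code is less opaque and easier to review.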

- Log all AI interactions for audit trails.

- Simulate attacks on vibe-coded apps to expose weaknesses.
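The approval-gate and audit-trail practices can be combined into a single checkpoint. The following Python sketch assumes a hypothetical `GeneratedArtifact` record, a rule that a security reviewer must sign off, and an in-memory list standing in for append-only log storage; none of these come from a specific product.

```python
import hashlib
import json
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class GeneratedArtifact:
    """Hypothetical record of one piece of AI-generated code."""
    author: str
    prompt: str
    code: str
    findings: list[str] = field(default_factory=list)  # e.g. output of a scanner
    approvals: set[str] = field(default_factory=set)   # roles that signed off


# Assumption: a security reviewer must approve before production use.
REQUIRED_APPROVALS = {"security"}


def audit_entry(artifact: GeneratedArtifact, decision: str) -> str:
    """Build one JSON-lines audit record; the code is stored as a hash, not verbatim."""
    return json.dumps({
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "author": artifact.author,
        "prompt": artifact.prompt,
        "code_sha256": hashlib.sha256(artifact.code.encode("utf-8")).hexdigest(),
        "findings": artifact.findings,
        "approvals": sorted(artifact.approvals),
        "decision": decision,
    })


def approval_gate(artifact: GeneratedArtifact, log: list[str]) -> bool:
    """Allow promotion only if scans are clean and required reviewers approved.

    Every decision, approved or blocked, is appended to the audit log.
    """
    missing = REQUIRED_APPROVALS - artifact.approvals
    allowed = not artifact.findings and not missing
    log.append(audit_entry(artifact, "approved" if allowed else "blocked"))
    return allowed


if __name__ == "__main__":
    audit_log: list[str] = []  # stand-in for append-only storage
    app = GeneratedArtifact("analyst@example.com", "build a sales dashboard", "print('hi')")
    print(approval_gate(app, audit_log))  # False: no security sign-off yet
    app.approvals.add("security")
    print(approval_gate(app, audit_log))  # True
    print(len(audit_log))                 # 2
```

Storing a hash of the code rather than the code itself keeps the log compact while still letting auditors confirm exactly which version was approved or blocked.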

Adopting such integrated systems keeps pace with AI’s evolution, turning potential crises into managed routines.

Hold Providers and Experts Accountable

Vendors must disclose AI integration details, including risk assessments and real-time mitigation protocols. Standard questionnaires fall short; demand granular transparency on model training data and vulnerability handling. This accountability extends to contracts, making providers liable for undisclosed flaws.

A burgeoning ecosystem of specialists fills the expertise void. These firms offer tailored audits, compliance frameworks, and ongoing monitoring for vibe coding hazards. Engaging them early prevents reactive scrambles amid breaches. For deeper insights, warnings from the UK's National Cyber Security Centre (NCSC) and community discussions on securing AI-built apps provide practical starting points.

| Risk Factor | Traditional Coding | Vibe Coding |
|---|---|---|
| Source Transparency | Tracked and vetted | Often unknown |
| Bug Accountability | Developer-owned | Diffuse; no clear owner |
| Legal Exposure | Reviewed by legal | Latent IP issues |

Key Takeaways

- Vibe coding accelerates innovation but amplifies unknown threats from untraceable code sources.

- Treat AI security as an enterprise-wide concern, starting at the C-suite.

- Proactive tools and vendor demands are non-negotiable for sustainable adoption.

The vibe coding revolution promises boundless creativity, yet history warns that unchecked technological leaps invite disaster. Organizations thrive by balancing enthusiasm with rigorous safeguards, ensuring AI serves rather than sabotages. What steps is your team taking to tame these risks? Share your thoughts in the comments.